“Big data” is a buzz phrase from the information technology world that is starting to crop up in maritime circles, too. What is it? It depends somewhat on who you talk to (and what they are trying to sell), but basically it is the growing trend of gathering and using more information in a wide range of forms — everything from the verbiage in a company’s emails to fuel injector data from across a shipping fleet.

Analysis of data used to be confined to very standard areas like payroll information. Now, new technologies are not only trying to digest so-called “unstructured data” like those emails or any other kind of data where the structure isn’t apparent, but they also are applying clever techniques, including artificial intelligence (AI), to try to spot patterns that might have been missed. GE’s locomotives and jet engines, for example, are heavily instrumented and produce enormous amounts of data. The new analytical techniques try to comb through it all to find useful needles in an otherwise uninteresting haystack. Airlines and railroads are gradually learning to make use of these techniques to fine-tune their operations, and larger maritime operators are also on board.

“Fleet optimization is a huge opportunity” for big data, said Marty Puranik, CEO and president of Atlantic.net, a cloud-hosting solutions provider based in Orlando, Fla. This could include fleet tracking as well as analysis of turnaround times to see where there might be bottlenecks that are limiting the number of vessels in transit. Likewise, with very large crude carriers (VLCCs) or chartered vessels “where the spot rate can fluctuate wildly, being able to sell excess capacity when prices are high would be a huge benefit,” he said.
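Here is a minimal Python sketch of the turnaround analysis. It assumes a hypothetical log of port calls recorded as (port, hours from arrival to departure) pairs, and the port names and figures are invented. It averages turnaround per port and ranks the slowest. That is one plausible first pass at spotting bottlenecks, not a description of any vendor's product.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical port-call log: (port, hours from arrival to departure).
port_calls = [
    ("Port A", 30.0), ("Port A", 34.0),
    ("Port B", 52.0), ("Port B", 60.0),
    ("Port C", 22.0), ("Port C", 26.0),
]

def average_turnaround(calls):
    """Group port calls by port and return the mean turnaround in hours."""
    by_port = defaultdict(list)
    for port, hours in calls:
        by_port[port].append(hours)
    return {port: mean(times) for port, times in by_port.items()}

def slowest_ports(calls, top_n=1):
    """Rank ports by mean turnaround, longest first; these are bottleneck candidates."""
    averages = average_turnaround(calls)
    ranked = sorted(averages.items(), key=lambda item: item[1], reverse=True)
    return ranked[:top_n]

print(slowest_ports(port_calls))  # prints [('Port B', 56.0)]
```

In practice the same grouping could be run per berth, per terminal or per time of day to narrow down where vessels are actually waiting.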

Dean Shoultz, founder of MarineCFO in Houma, La., said his company “is all over” this new technology. MarineCFO specializes in vessel optimization, regulatory compliance and risk management.

“Our customers are companies such as ferry operators, and historically it has been complicated and expensive to get vessel-based sensor data and to get it to the cloud for analysis through machine learning or AI,” he said. Now, though, new cloud-based technologies like Microsoft’s Azure IoT Hub are changing the equation.

“These technologies are only limited by the ‘data pipe,’ the amount of information you can actually get to them,” Shoultz said. “Before these technologies, if you had a sensor measuring vibration, you might have just taken some average of the data” and missed out on information that could have signaled a looming problem.
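Shoultz's point about averaging can be illustrated with a small Python sketch. The readings and the alert threshold are hypothetical. The example shows only that a window mean can sit comfortably below an alarm level while a single short spike would have crossed it, and that keeping the peak alongside the mean preserves that spike.

```python
from statistics import mean

ALERT_LEVEL = 10.0  # hypothetical vibration alarm threshold, mm/s

# Hypothetical one-minute window sampled once per second: steady running
# with one brief spike in the final second.
readings = [2.0] * 59 + [14.0]

def summarize_by_average(samples):
    """The older approach: keep only the mean of the window."""
    return mean(samples)

def summarize_with_peak(samples):
    """Keep the mean but also the peak, so short transients are not lost."""
    return {"mean": mean(samples), "peak": max(samples)}

average = summarize_by_average(readings)
summary = summarize_with_peak(readings)
print(average > ALERT_LEVEL)          # prints False: the spike is averaged away
print(summary["peak"] > ALERT_LEVEL)  # prints True: the peak reveals it
```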

“Historically, this isn’t that novel; companies like Maersk have been doing it for years,” he said. But now, it is feasible for almost anyone to store and move even petabytes of data, and “improving communication bandwidth is in our favor, so this could be doable for ferry or tug operators.”

Shoultz said his company has been delving into the field with its own offering. “We have a data service for preserving sensor information, for example, with relation to an injury or a collision. We have seen cases where someone claimed they had gone in the engine room and got hurt, but sensor data showed the room wasn’t even opened,” he said.

Those sensors present both an opportunity and a huge challenge. Because they are less expensive than ever, vessels could easily have hundreds or even thousands of them, with readings flowing almost continuously on factors such as vibration, temperature and pressure.

One of the best options for meeting the challenge of processing this wealth of information is what IT people have dubbed “edge” computing, where an onboard computer handles the first level of data digestion: summarizing and picking out potentially important data outliers. This yields a more manageable amount of data that can then be sent to the cloud and perhaps combined with data from other vessels. So, for example, vibration data from vessels using identical power plants could more easily reveal symptoms of a problem than analysis of data from just one vessel. Companies like Inmarsat, Orbcomm, Iridium and Thuraya have blue-water offerings in this area, and terrestrial providers also offer high-capacity communication packages.
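The two stages of onboard summarization followed by fleet-wide comparison ashore might look something like this Python sketch. Everything here is assumed for illustration: the function names, the z-score rule for picking outliers (one simple choice among many), the 25 percent tolerance and the vessel data are all hypothetical, and commercial edge platforms will differ.

```python
from statistics import mean, median, pstdev

def edge_summary(samples, z_threshold=3.0):
    """Onboard step: reduce a window of raw readings to a compact summary.

    Outliers are readings more than `z_threshold` standard deviations from
    the window mean (a simple z-score rule, assumed here for illustration).
    """
    mu = mean(samples)
    sigma = pstdev(samples)
    outliers = []
    if sigma > 0:
        outliers = [(i, x) for i, x in enumerate(samples)
                    if abs(x - mu) / sigma > z_threshold]
    return {"count": len(samples), "mean": mu, "max": max(samples),
            "outliers": outliers}

def flag_vessels(fleet_means, tolerance=0.25):
    """Shore step: flag vessels whose mean vibration exceeds the fleet median
    by more than `tolerance` (a fraction). Assumes identical power plants."""
    baseline = median(list(fleet_means.values()))
    return sorted(v for v, m in fleet_means.items()
                  if m > baseline * (1 + tolerance))

# Hypothetical one-window vibration log from a single vessel (mm/s).
window = [2.0, 2.2, 1.8, 2.1, 1.9] * 20
window[57] = 9.0  # a single transient spike

summary = edge_summary(window)  # compact record sent ashore instead of 100 readings
print(summary["outliers"])      # prints [(57, 9.0)]

# Hypothetical fleet: mean vibration per vessel, all with the same engine model.
fleet = {"Vessel 1": 2.0, "Vessel 2": 2.1, "Vessel 3": 1.9, "Vessel 4": 2.9}
print(flag_vessels(fleet))      # prints ['Vessel 4']
```

Comparing against the fleet median rather than the mean keeps one misbehaving vessel from dragging the baseline toward itself.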

|

|

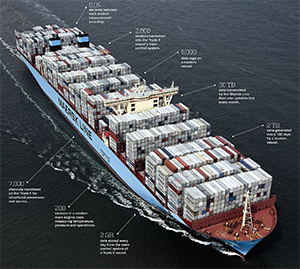

The rise of satellite technology and electronic sensors has led to the generation of massive amounts of data on modern vessels, presenting opportunities to improve efficiencies and cut maintenance costs. Maersk has been among the frontrunners in pursuing the benefits. |

|

Courtesy Maersk/Facebook |

A recent report from Persistence Market Research, an analytics firm based in India, sees the most intensive adoption of big data in the maritime industry occurring first in Europe, where corporations are pursuing all kinds of “digitalization” initiatives with enthusiasm. The report, “Maritime Big Data Market: Global Industry Trend Analysis 2012 to 2017 and Forecast 2017-2025,” notes that most of the maritime-focused big data vendors are based in Europe, including Maritime International Inc., Windward Ltd. and the Big Data Value Association. “Several other companies like Laros Inc. and Ericsson Inc. are also expanding their (offerings) in North America,” according to the report.

“Big data is of important value to large maritime operators, as it allows these cruise ship companies, large tanker and other fleet operators to work in coordination with their agents, ports and other communicating third parties by turning unstructured data into insightful information,” said Nivedita Upadhyay, a consultant at Persistence Market Research. This information can be related to security, the location of a ship, weather forecasts, behavior of ship equipment and other factors.

According to Upadhyay, maritime operators have realized the value of big data and have started entering into partnerships with technology providers in order to implement artificial intelligence.

For instance, she said, Fujitsu entered into a partnership in 2016 with ClassNK, an international ship classification society, to provide a maritime big data platform. The platform collects machine-based data such as engine information and weather information from ships at sea and helps operators forecast machine life and potential malfunctions. This can reduce the risk of system failures and also better align maintenance and repair activities with actual needs.
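The report does not describe how the ClassNK/Fujitsu platform forecasts machine life. As a generic illustration only, one of the simplest approaches is to fit a straight-line trend to a condition indicator and extrapolate it to a failure threshold. The Python sketch below assumes a hypothetical wear reading logged against running hours and a hypothetical threshold. Real predictive-maintenance models are usually far more sophisticated.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def hours_until_threshold(hours, readings, threshold):
    """Extrapolate the linear trend to estimate running hours left before the
    reading reaches `threshold`. Returns None if the trend is not rising."""
    slope, intercept = fit_line(hours, readings)
    if slope <= 0:
        return None
    crossing = (threshold - intercept) / slope
    return max(0.0, crossing - hours[-1])

# Hypothetical wear indicator logged every 100 running hours.
running_hours = [0, 100, 200, 300, 400]
wear = [1.0, 1.5, 2.0, 2.5, 3.0]
remaining = hours_until_threshold(running_hours, wear, threshold=5.0)
print(round(remaining))  # prints 400
```

An estimate like this is what would let an operator schedule an overhaul around actual wear rather than a fixed calendar.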

Smaller maritime operators are also moving toward the adoption of these technologies, but usually at a slower rate. “Only around 8 to 10 percent of smaller maritime operators are using these digitalization or big data technologies within their vessels,” Upadhyay said.

She attributed the slow adoption to the current high cost of implementation, which exceeds the budget for most smaller operators. Another reason is the lack of knowledge of the technologies and their benefits to shipping, she said. However, Upadhyay added that big data could be quite significant for smaller operators too, since it can provide the same kind of advantages it confers on larger operators.

“It allows them to move their vessels on time with real-time navigation, and helps them in emergency cases by providing information with the help of predictive analytics,” she said. Moreover, due to the availability of cloud-based platforms — which typically reduce the need for on-staff expertise and cut costs overall — it is expected that in the near future, smaller operators will adopt big data solutions more easily.

“We can say that smaller operators will definitely go in for cloud-based maritime big data offerings due to less cost and the ease of configuration,” Upadhyay said.

Larger maritime operators also sometimes prefer using cloud-based offerings. For instance, she noted, the classification society DNV GL has launched a big data maritime platform called Veracity. It is designed to provide security and predictive analysis, reduce machine downtime and improve real-time communication. “(Veracity) includes familiar Microsoft cloud technology so that more maritime companies can adopt the platform,” she said.

For his part, Puranik of Atlantic.net envisions smaller operators taking advantage of big data technology through a mixture of telematics, the cloud and cloud analytics.

He said that, armed with this technology, smaller ship operators should be able to capture and analyze data like time in transit and variables that affect delivery quality, for example, the effect of storms compared to flat seas. Tracking a vessel and comparing its run times throughout the year to check for variability in cost or seasonality should allow operators “to adjust pricing to more accurately reflect costs,” he said.
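Here is a minimal Python sketch of the seasonal comparison Puranik describes. It assumes a hypothetical voyage log of (month, transit hours) for a single route, uses Northern Hemisphere seasons, and treats transit time as a rough stand-in for cost. It groups trips by season and reports the trip count, mean and spread, which are the kind of figures an operator might weigh when reviewing rates.

```python
from collections import defaultdict
from statistics import mean, pstdev

# Assumed Northern Hemisphere seasons, keyed by month number.
SEASON_OF_MONTH = {
    12: "winter", 1: "winter", 2: "winter",
    3: "spring", 4: "spring", 5: "spring",
    6: "summer", 7: "summer", 8: "summer",
    9: "autumn", 10: "autumn", 11: "autumn",
}

# Hypothetical voyage log for one route: (month, transit time in hours).
voyages = [(1, 40.0), (1, 46.0), (2, 44.0), (7, 30.0), (7, 32.0), (8, 31.0)]

def transit_stats_by_season(log):
    """Group trips by season; report trip count, mean and spread of transit time."""
    by_season = defaultdict(list)
    for month, hours in log:
        by_season[SEASON_OF_MONTH[month]].append(hours)
    return {
        season: {"trips": len(times), "mean": mean(times), "spread": pstdev(times)}
        for season, times in by_season.items()
    }

stats = transit_stats_by_season(voyages)
for season, s in sorted(stats.items()):
    print(f"{season}: {s['trips']} trips, mean {s['mean']:.1f} h, spread {s['spread']:.1f} h")
```

A noticeably longer and more variable winter figure, as in this invented data, is the sort of signal that might support a seasonal rate adjustment.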